The deeper cost is invisible: routine work constantly competing with the judgment and systemic thinking that only experienced QA professionals can provide.

In this article, Oleksii Iven explores what happens when that rhythm breaks — sharing how he designed an AI-powered testing assistant capable of analysing pages, generating test cases from business requirements, executing them through a browser, and producing a full QA report automatically.

This is Part 2 of a three-part series. You can find the other parts below.

- The End of Traditional SDLC: Rethinking QA in the Age of AI (Part 1)

- The End of Traditional SDLC: What the Claude Browser Extension Delivers for QA (Part 3)

- QA isn’t just running tests anymore — it’s designing the systems that run them.

- Routine testing runs itself — from page analysis to full reports — in minutes.

- A 45% pass rate isn’t failure; it’s insight into exactly what’s broken and why.

- AI doesn’t just report numbers — it spots risks, patterns, and next steps before humans do.

- Human expertise turns AI from noise into signal — risk analysis, system thinking, and context matter more than ever.

From test cases to collaboration: rethinking QA in the age of AI

The role of QA is quietly changing — not because of new tools, but because of how we choose to use them. A deeper shift happens when AI is taken seriously as a collaborator rather than a feature. The focus moves away from individual test cases and backlog tasks toward something broader — the patterns and invisible weight that QA carries every day.

Much of that effort goes to routine: interpreting requirements, translating them into scenarios, executing predictable flows, documenting outcomes. None of this work is unimportant. But it constantly competes with the parts of QA that genuinely require human judgment — risk analysis, domain knowledge, the ability to see what a specification does not say.

That tension is where the idea of a QA assistant takes shape. Not a replacement for expertise, and not an abstract experiment — a practical helper designed to absorb context and handle everyday validation tasks, freeing QA to focus on decisions that actually matter.

The concept is ambitious: a system that accepts a URL, analyses the page, generates test cases from business requirements, executes them through a browser, and produces a professional report. All automatic. All in minutes. And built by someone whose background is testing, validation, and quality assurance — not software development.

AI changes the equation. When treated as a collaborator, old role boundaries quietly dissolve.

AI Testing Assistant: The architecture behind the idea

The AI Testing Assistant is what that shift looks like in practice: a system built by a QA team that takes a URL and a set of business requirements and produces a complete test run on its own. The architecture behind it is straightforward:

- RAG system (LangChain + Milvus) — processing business requirements through vector embeddings, contextual search for relevant information.

- AI engine (Claude API) — page analysis, test case generation, and report insights creation.

- Test execution (Playwright) — browser automation, screenshots at every step, detailed logging.

- Web interface (Flask) — configuration, real-time execution logs, interactive results.

- Reporting engine — HTML reports with visual summaries and AI-generated recommendations.

The system was not designed to replace QA expertise. Without business context, domain understanding, and validation experience, it would generate noise instead of signal. The quality of the output depends entirely on the quality of the thinking behind it.
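To make the "vector embeddings plus contextual search" idea from the RAG component more concrete, here is a minimal sketch in plain Python. It is not the LangChain + Milvus setup the system uses: the bag-of-words `embed` function is a toy stand-in for a learned embedding model, and the names `embed`, `cosine`, and `retrieve` are hypothetical. The requirement texts are the ones used in the test run described in this article.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': word counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, requirements: list[str], k: int = 2) -> list[str]:
    """Return the k requirements most similar to the query."""
    query_vector = embed(query)
    ranked = sorted(
        requirements,
        key=lambda req: cosine(query_vector, embed(req)),
        reverse=True,
    )
    return ranked[:k]


REQUIREMENTS = [
    "The search input field must be visible, enabled, and ready for user interaction.",
    "Users must be able to enter a search query and submit it using the keyboard (Enter) or the search button.",
    "The system must return a list of relevant search results for a valid query.",
    "Each search result must include a clickable link, title, and brief description.",
]

if __name__ == "__main__":
    for hit in retrieve("clickable link and title of each result", REQUIREMENTS, k=1):
        print(hit)
```

A vector database does the same ranking at scale, with embeddings that capture meaning rather than exact word overlap.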

How it works: step by step

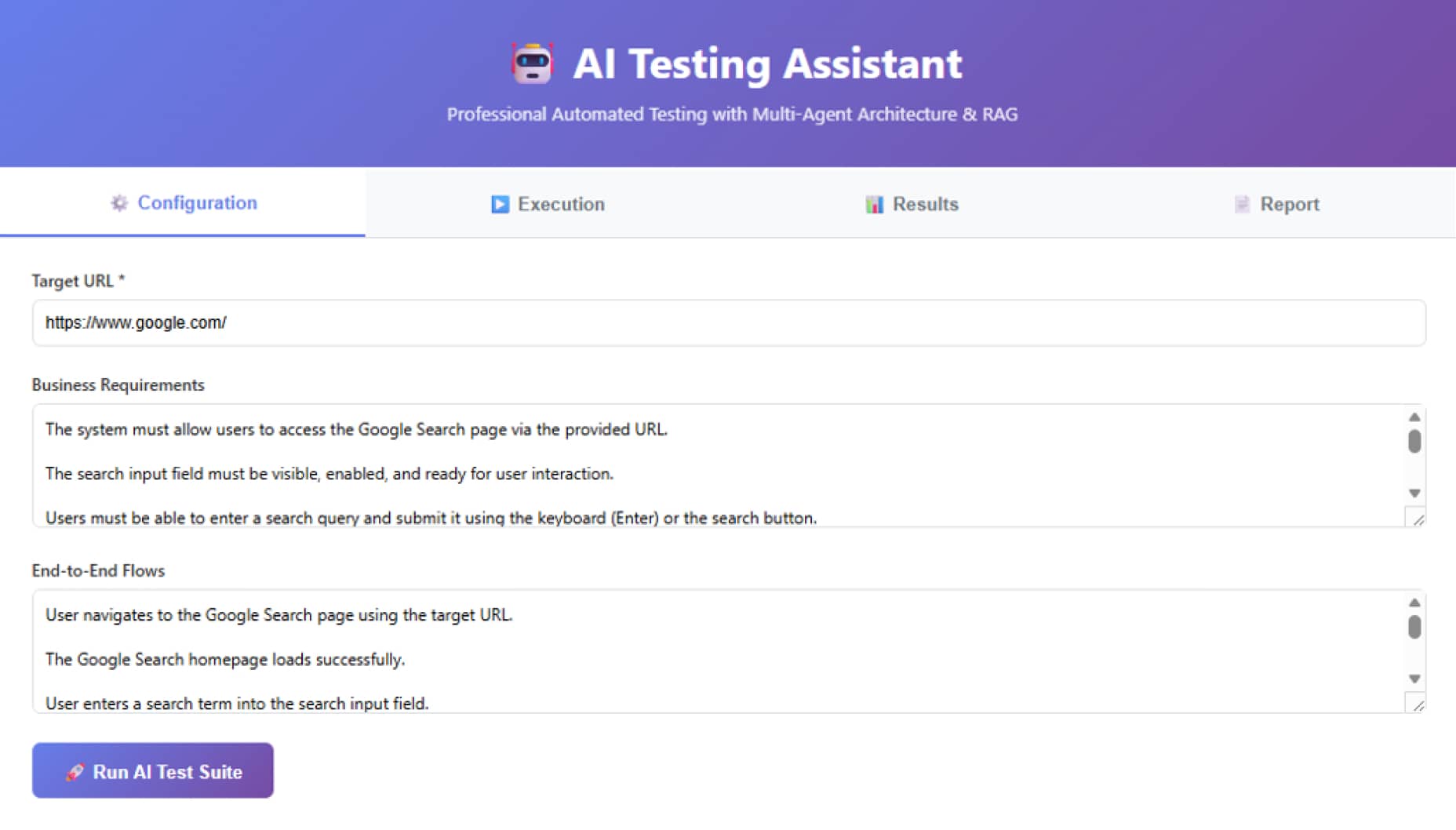

Below is a real test run on Google Search — from raw requirements to a completed report — showing exactly what the system does at each stage.

Business requirements:

- The search input field must be visible, enabled, and ready for user interaction.

- Users must be able to enter a search query and submit it using the keyboard (Enter) or the search button.

- The system must return a list of relevant search results for a valid query.

- Each search result must include a clickable link, title, and brief description.

End-to-end flows:

- User navigates to the Google Search page.

- User enters a search term into the search input field.

- User submits the search request (presses Enter or clicks the search button).

- The system processes the request and displays search results.

AI Testing Assistant — configuration screen

AI Testing Assistant — execution screen

After clicking "Run AI Test Suite", the system moves through five stages automatically:

- Discovery phase — Playwright opens the browser, analyses the DOM structure, and finds all interactive elements (buttons, inputs, links).

- RAG processing — Business requirements are processed through vector embeddings, and the system finds relevant context for test generation.

- Test generation — Claude API receives page structure + requirements + RAG context and generates detailed test cases with specific selectors.

- Execution — Playwright executes each step of each test, takes screenshots, and logs results.

- Reporting — Claude analyses results and generates a professional QA report with insights and recommendations.
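The five stages above can be sketched as a simple pipeline in which each stage is a pluggable function. This is an illustration of the data flow only, under the assumption that each stage hands plain Python values to the next. The class and field names (`Pipeline`, `CaseResult`, `StepResult`) are hypothetical and do not come from the actual system.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class StepResult:
    description: str
    passed: bool


@dataclass
class CaseResult:
    name: str
    steps: list[StepResult] = field(default_factory=list)

    @property
    def passed(self) -> bool:
        # A test case passes only if every one of its steps passed.
        return all(step.passed for step in self.steps)


@dataclass
class Pipeline:
    # Each field is one stage; real implementations would wrap Playwright,
    # the vector store, and the Claude API respectively.
    discover: Callable[[str], list[str]]        # URL -> interactive elements
    retrieve: Callable[[list[str]], list[str]]  # requirements -> relevant context
    generate: Callable[[list[str], list[str], list[str]], list[dict]]
    execute: Callable[[dict], CaseResult]       # one test case -> its result
    report: Callable[[list[CaseResult]], str]   # all results -> report text

    def run(self, url: str, requirements: list[str]) -> str:
        elements = self.discover(url)
        context = self.retrieve(requirements)
        cases = self.generate(elements, requirements, context)
        results = [self.execute(case) for case in cases]
        return self.report(results)
```

Keeping the stages as separate callables means each one can be replaced with a stub in a unit test, which is useful because three of the five stages depend on a browser or an external API.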

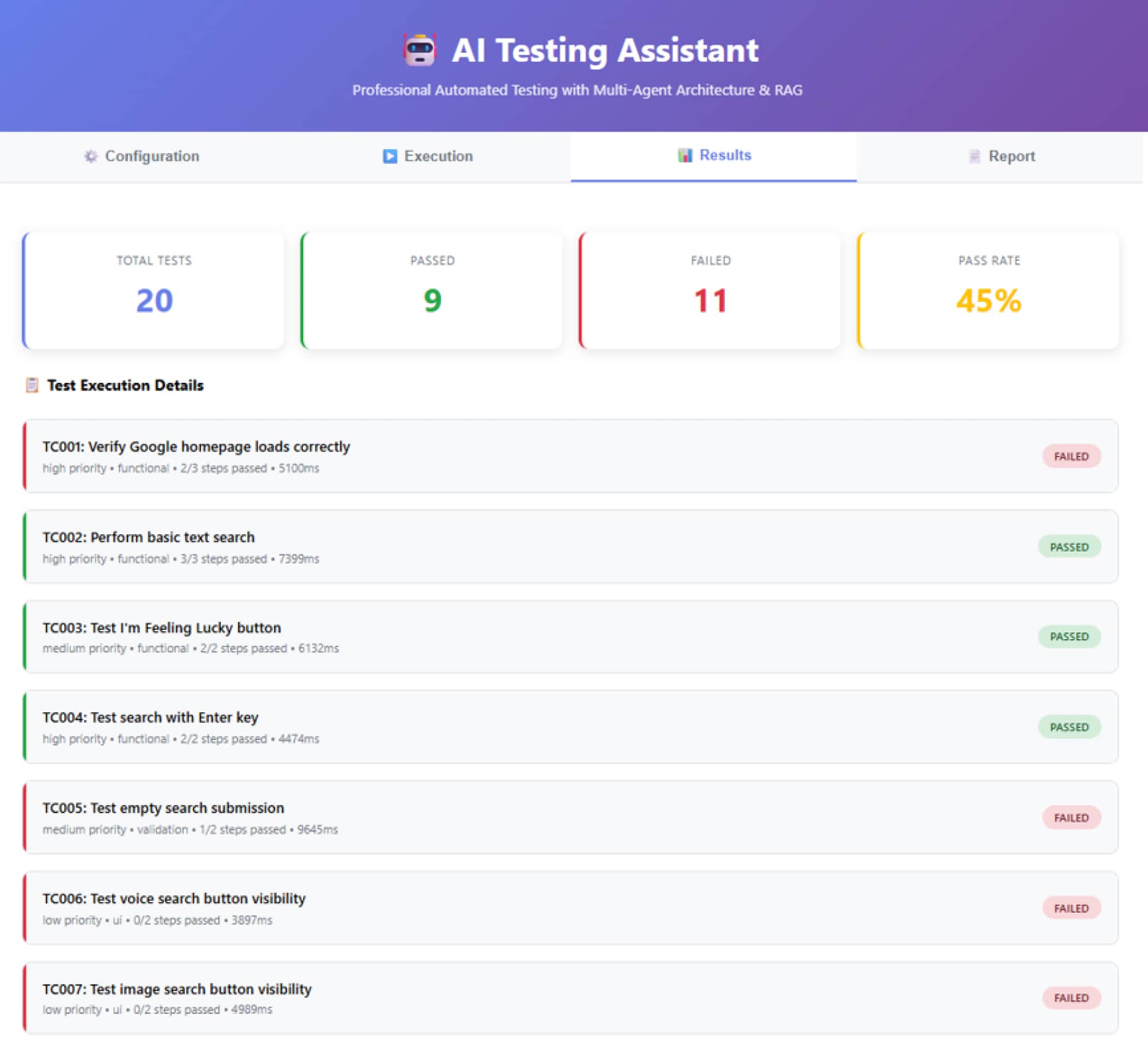

AI Testing Assistant — results screen

The results screen shows the outcome of the full run at a glance:

- 20 test cases were generated automatically from the provided requirements

- 9 passed, 11 failed

- Overall pass rate: 45%

At first glance, a 45% pass rate is not impressive. But it is a real result from a real test run. And most importantly, the system honestly showed what went wrong:

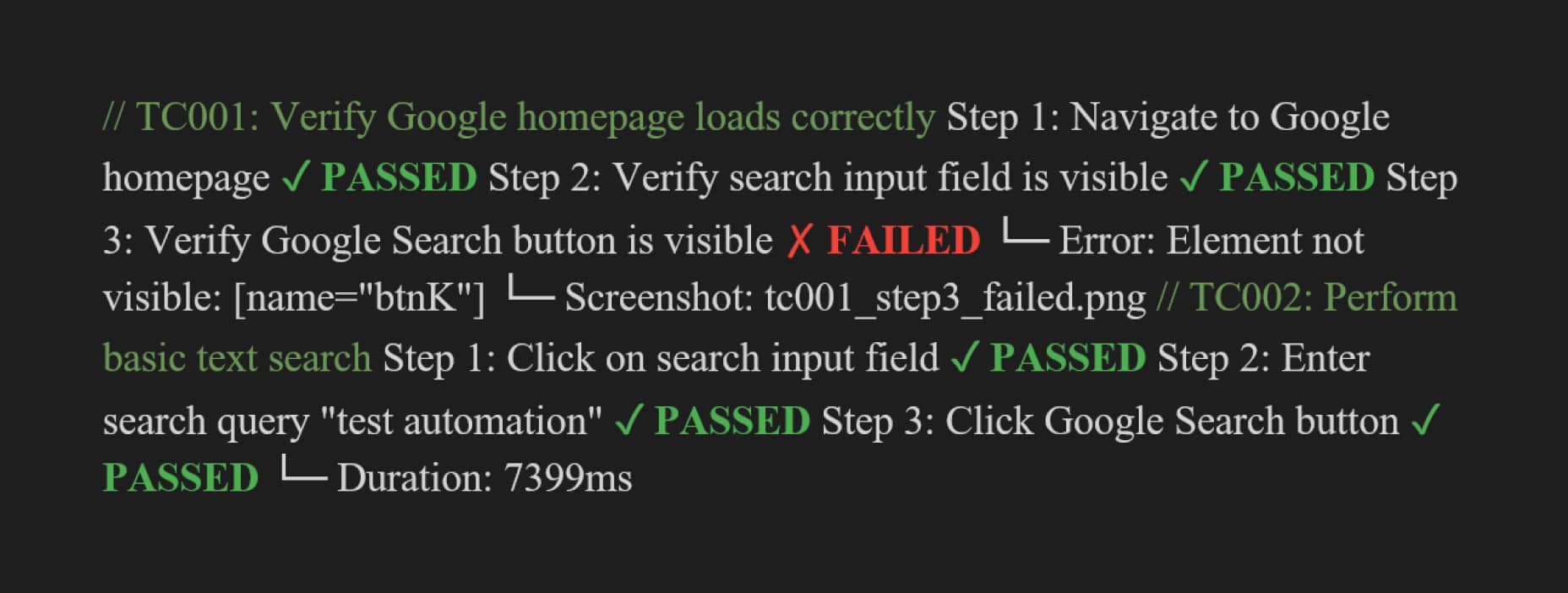

AI Testing Assistant — step-level execution log

Most failures came down to selector issues specific to the localised version of Google — Ukrainian-locale elements carried different attributes than the defaults the system targeted. This is exactly the kind of signal a QA engineer needs: not just that something failed, but why, and where the fix should go. In this case, the path forward is clear — selectors need to be made locale-agnostic before the tests can run reliably across regions.
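One way to make selectors less dependent on locale is to try a list of candidates, ordered from least to most language-dependent, and use the first one that matches. The sketch below assumes `page` is a Playwright `Page` (it only uses `page.locator(...).count()`). The candidate selectors and the function name `first_matching_selector` are hypothetical, and which attributes stay stable across locales on a given site has to be checked against the real page.

```python
from typing import Optional

# Hypothetical candidates for a search box, most stable first.
SEARCH_BOX_CANDIDATES = [
    '[name="q"]',             # assumption: the form field name is not translated
    '[role="combobox"]',      # assumption: the ARIA role is language-independent
    '[aria-label="Search"]',  # label text is often translated, so it comes last
]


def first_matching_selector(page, candidates: list[str]) -> Optional[str]:
    """Return the first selector that matches at least one element, else None."""
    for selector in candidates:
        if page.locator(selector).count() > 0:
            return selector
    return None
```

With Playwright's sync API, `page` would be the object returned by `browser.new_page()` after navigating to the target URL. Returning `None` instead of raising lets the caller log a clear "no selector matched" failure, which is the kind of step-level signal described above.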

Below is an example of a successfully generated test case, including detailed steps and a screenshot.

AI Testing Assistant — test case detail view

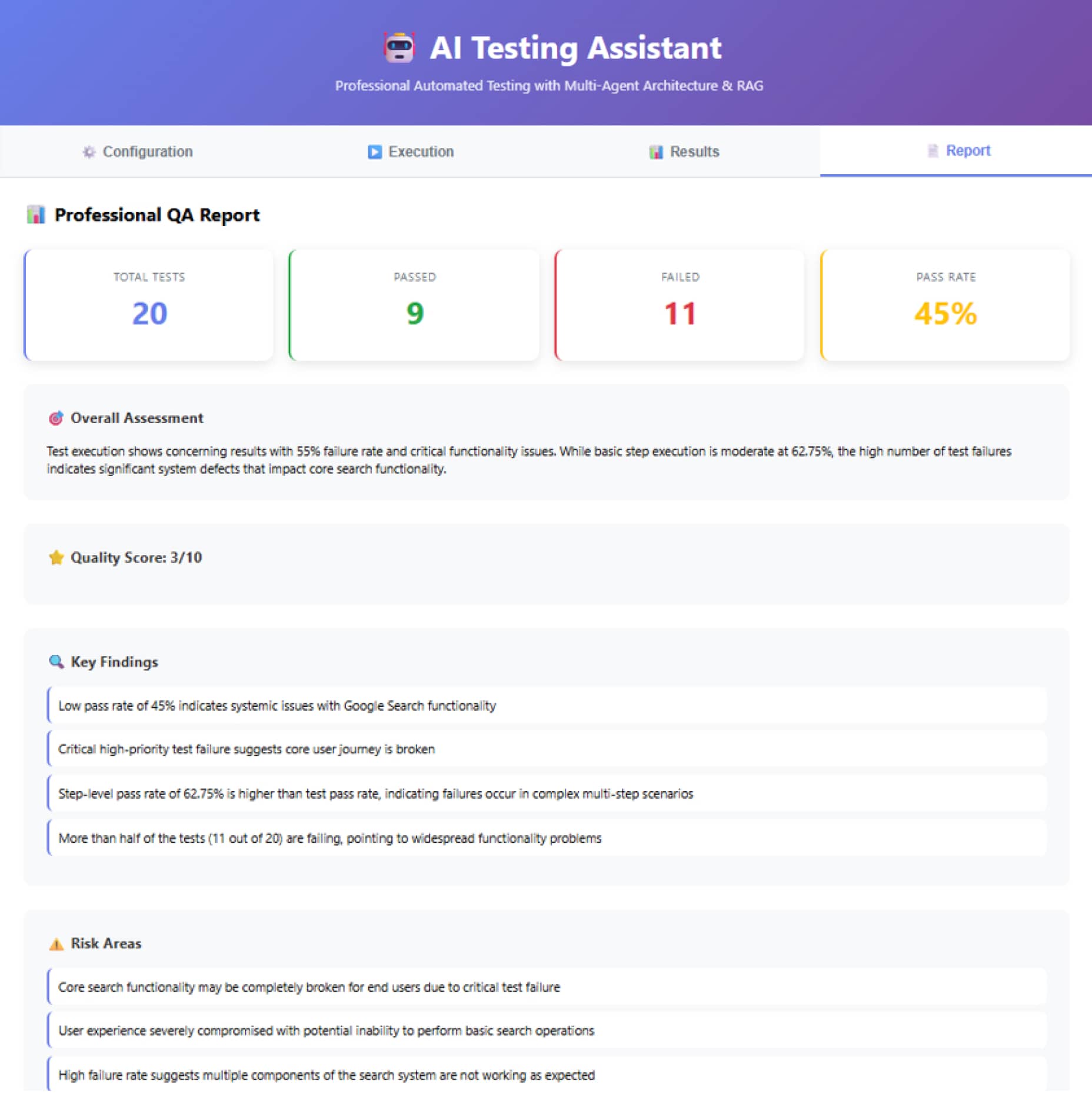

The most interesting part of the report isn't just pass/fail statistics. It's the AI-generated insights:

- A low pass rate of 45% indicates systemic issues with element detection.

- Step-level pass rate (62.75%) is higher than the test pass rate — failures occur in complex multi-step scenarios.

- Critical high-priority test failure suggests localisation-specific selectors needed.
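The gap between the two figures follows from how they are counted: a test passes only if all of its steps pass, so one failed step fails the whole test while its earlier steps still count as passed. A minimal sketch with made-up data (the step outcomes below are hypothetical, not taken from the actual run):

```python
def pass_rates(results: list[list[bool]]) -> tuple[float, float]:
    """Return (test-level, step-level) pass rates in percent.

    results[i][j] is True if step j of test i passed.
    """
    if not results or not any(results):
        raise ValueError("at least one test with at least one step is required")
    tests_passed = sum(all(steps) for steps in results)
    steps_total = sum(len(steps) for steps in results)
    steps_passed = sum(sum(steps) for steps in results)
    return 100 * tests_passed / len(results), 100 * steps_passed / steps_total


# Hypothetical run: four tests, two of which fail on a single late step.
example = [
    [True, True],
    [True, False],
    [True, True, True, False],
    [True, True],
]
test_rate, step_rate = pass_rates(example)
print(f"tests: {test_rate:.2f}%  steps: {step_rate:.2f}%")
```

Applied to the run above, the test-level figure is 9 passed out of 20, which gives 45%.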

AI Testing Assistant — professional QA report

The report then turns these insights into concrete recommendations:

- Implement locale-agnostic selectors using data-testid or aria-label attributes.

- Add explicit waits for dynamic elements that appear after page interactions.

- Consider halting release until the pass rate improves to at least 80%.
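Two of these recommendations translate almost directly into code. The sketch below shows an explicit wait before a click and a release gate at the 80% threshold. It assumes `page` is a Playwright `Page` and uses `Locator.wait_for` and `Locator.click`. The function names are hypothetical, and the 5-second default timeout is an arbitrary choice.

```python
def click_when_visible(page, selector: str, timeout_ms: int = 5000) -> None:
    """Wait until the element is visible, then click it.

    Playwright raises a timeout error from wait_for if the element
    does not become visible in time.
    """
    target = page.locator(selector)
    target.wait_for(state="visible", timeout=timeout_ms)
    target.click()


def release_allowed(passed: int, total: int, threshold: float = 0.80) -> bool:
    """Return True if the pass rate meets the release threshold."""
    if total == 0:
        return False  # no evidence is treated as not ready
    return passed / total >= threshold
```

Playwright actions such as `click` already wait for the element to become actionable, so the explicit wait mainly helps by producing a distinct, easy-to-read failure when a dynamic element never appears. For the run described here, `release_allowed(9, 20)` returns `False`.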

AI Testing Assistant — report detail: risk areas, recommendations, and test coverage

Core test generation function

The fragment above shows how the system generates tests. The full system runs to around 800 lines of Python, not written from scratch, but shaped through architecture decisions and iterative guidance, with Claude generating and refactoring the code throughout. This is what the new QA role looks like in practice: not writing everything independently, but orchestrating AI to build the tools the work requires.
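The original fragment is not reproduced in this text. As a rough illustration only, and not the author's function, a generation step of this kind might look like the sketch below. It assumes the `anthropic` Python SDK with an API key in the `ANTHROPIC_API_KEY` environment variable, and a prompt that asks for a JSON array. The prompt wording, the JSON shape, and the names `build_prompt`, `parse_test_cases`, and `generate_test_cases` are all hypothetical, and the model name is left to the caller.

```python
import json

PROMPT_TEMPLATE = """You are a QA engineer. Using the page elements, business requirements,
and retrieved context below, write test cases as a JSON array. Each item must have
"name" (string) and "steps" (array of objects with "action", "selector", "value").

Page elements:
{elements}

Business requirements:
{requirements}

Retrieved context:
{context}
"""


def build_prompt(elements: list[str], requirements: list[str], context: list[str]) -> str:
    """Combine page structure, requirements, and RAG context into one prompt."""
    def bullets(items: list[str]) -> str:
        return "\n".join(f"- {item}" for item in items)

    return PROMPT_TEMPLATE.format(
        elements=bullets(elements),
        requirements=bullets(requirements),
        context=bullets(context),
    )


def parse_test_cases(raw: str) -> list[dict]:
    """Extract and validate the JSON array from the model's reply."""
    # Models sometimes wrap JSON in extra prose, so take the outermost [...] span.
    start, end = raw.find("["), raw.rfind("]")
    if start == -1 or end <= start:
        raise ValueError("no JSON array found in model output")
    cases = json.loads(raw[start:end + 1])
    for case in cases:
        if not isinstance(case, dict) or "name" not in case or not isinstance(case.get("steps"), list):
            raise ValueError(f"malformed test case: {case!r}")
    return cases


def generate_test_cases(elements, requirements, context, model: str, max_tokens: int = 4096) -> list[dict]:
    """Call the Claude API and return parsed test cases (needs network access)."""
    import anthropic  # imported here so the helpers above work without the SDK

    client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment
    message = client.messages.create(
        model=model,
        max_tokens=max_tokens,
        messages=[{"role": "user", "content": build_prompt(elements, requirements, context)}],
    )
    return parse_test_cases(message.content[0].text)
```

Validating the reply before execution matters here: a malformed test case is better rejected at generation time than discovered as a confusing failure in the browser.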

The prototype works, but it is exactly that — a prototype. A production-ready version would need error handling, retry logic, parallel test execution, and CI/CD integration. The goal was to prove the concept. And it does.
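Two of those production concerns, retry logic and parallel execution, can be sketched with the standard library alone. This is an illustration under simple assumptions: each test is a zero-argument callable returning `True` or `False`, any exception counts as a transient failure worth retrying, and the names `with_retries` and `run_in_parallel` are hypothetical.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, TypeVar

T = TypeVar("T")


def with_retries(fn: Callable[[], T], attempts: int = 3, base_delay: float = 0.5) -> T:
    """Call fn, retrying on any exception with exponential backoff."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(1, attempts + 1):
        try:
            return fn()
        except Exception:
            if attempt == attempts:
                raise  # out of attempts: surface the last error
            time.sleep(base_delay * 2 ** (attempt - 1))
    raise RuntimeError("unreachable")


def run_in_parallel(
    tests: list[Callable[[], bool]],
    workers: int = 4,
    base_delay: float = 0.5,
) -> list[bool]:
    """Run tests concurrently; a test that keeps raising is recorded as failed."""
    def safe(test: Callable[[], bool]) -> bool:
        try:
            return with_retries(test, base_delay=base_delay)
        except Exception:
            return False

    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map preserves input order, so results line up with tests.
        return list(pool.map(safe, tests))
```

One caveat for browser tests: Playwright's sync API objects are not safe to share across threads, so each worker would need to start its own Playwright instance and browser context.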

How the AI Testing Assistant changes the QA role

The AI Testing Assistant is not the end goal. It is an enabler for a new way of working. Previously, QA was limited by what could be done manually. Now the only limits are imagination and the ability to articulate the task correctly.

A prototype like this would once have required a team of developers and months of work. That does not mean QA is getting easier. On the contrary, the bar is rising. When routine is automated, expectations grow. QA is now expected to deliver not just tests, but quality systems.

The tools have changed. The pace has changed. But the goal remains the same: ship products that users can trust. AI just lets us do it faster and at scale.

For years, QA lived inside a familiar rhythm: requirements arrive, test cases follow, execution happens, reports are written. Even when automation entered the picture, the role itself remained largely the same — steps were automated, not thinking. Execution was sped up, but the structure of work stayed untouched.

This prototype breaks that rhythm. It is not simply another automation framework — it is a different way of approaching quality altogether. Instead of manually translating requirements into tests, QA defines how validation should happen and delegates execution to a system built for that purpose. Instead of validating what others have built, QA moves upstream and starts shaping the validation logic itself.

Just yesterday, QA was expected to run tests. Today, QA can create a tool that runs them.

The bottom line

Building this system required no development background — only the ability to think clearly about quality and articulate the task correctly. Artificial intelligence does not invent that expertise and does not replace QA judgment.

The question for QA is no longer "Did we test enough?" It is "Can this system reliably test what matters?" That is harder work. But it is also more meaningful.

Curious what happens when AI becomes a constant presence inside the browser? That is exactly what Part 3 of this series is about.

FAQs

AI in QA automates test case generation, execution, and reporting. It detects defects and anomalies while highlighting root causes. AI provides insights, risk analysis, and actionable recommendations beyond pass/fail results. It also monitors AI systems to ensure reliability, accuracy, and data integrity over time.

Quality assurance in artificial intelligence (AI) checks that AI systems remain reliable and accurate. It verifies that these technologies uphold ethical standards throughout their entire lifecycle. Unlike traditional software testing, AI quality assurance emphasises data integrity and evaluates machine learning model performance. The process aims to identify and prevent algorithmic bias. Rigorous testing is conducted to ensure the system safely handles unexpected inputs. Following deployment, continuous monitoring is implemented to detect any decline in model accuracy over time.
